Hello folks!

Mika suggested that we, the ATRO development team members, keep a DevBlog on the progress we are making on the web crawler. We thought this was a rather nice idea: a good way to document what we are doing and to keep everyone up to speed on the development progress.

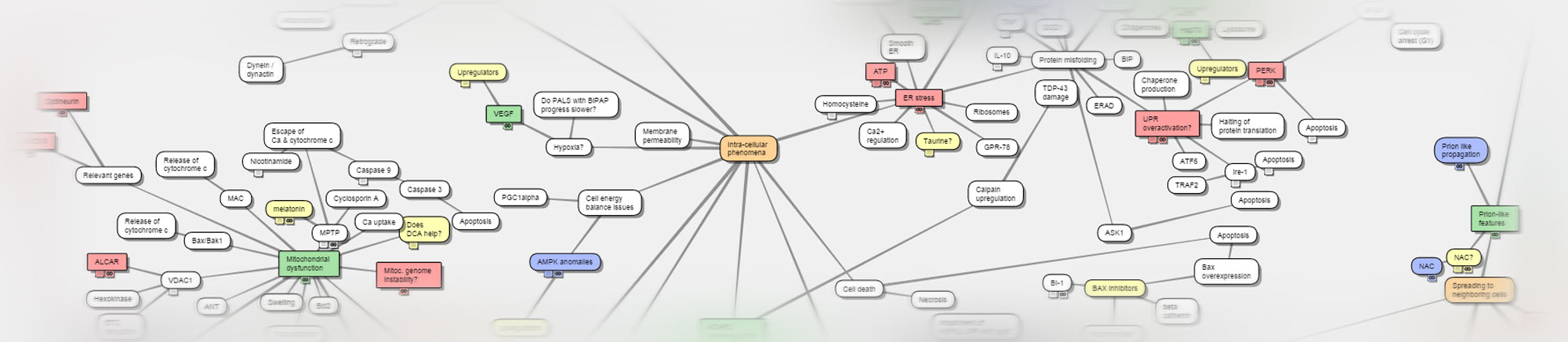

Since this is the very first post we are writing, a short introduction to our team is certainly in order. We are: Jussi Roberts, Joni Nissinen, Joona Toivanen and Miikka Ronkainen. We are all master’s level computer science students from the University of Oulu and we have undertaken this project as part of our studies. When we enrolled in this course, we had the option to express our preferences for the project we would be assigned to. When we saw that we would have a chance to help researchers in their effort to study ALS, and to use our skills and interests to develop something meaningful, we were all very excited.

The first step of our project was to research existing open-source solutions to use as a basis for the web crawler and the database. We found several options and eventually decided to develop the crawler with Scrapy, a crawler framework written in Python. Python is a popular and powerful scripting language, and Scrapy is one of the most commonly used crawler frameworks available and is highly customizable.

For the database we explored various NoSQL solutions, but none of them suited our needs. NoSQL really shines with unstructured, rapidly expanding big data, but the nature of our data is quite the opposite. Since we are mining textual metadata of academic articles, the schema of our database is quite clear, and as a result we have decided that a relational database management system (RDBMS) is the way to go. The exact database system is still undecided, but we are leaning towards either MariaDB or PostgreSQL. Both are open source, follow the SQL standard closely, scale well and are fast. But the research continues...
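To show what we mean by a clear schema, here is a minimal sketch of how article metadata could map onto relational tables. It is only an illustration, not our actual design: the table names, column names and helper functions are all hypothetical, the sample values are placeholders, and we use Python's built-in SQLite module purely as a stand-in because the real database system has not been chosen yet.

```python
import sqlite3

# Hypothetical schema: table and column names are illustrative assumptions,
# not the project's real design. SQLite stands in for the undecided RDBMS.
SCHEMA = """
CREATE TABLE article (
    id      INTEGER PRIMARY KEY,
    title   TEXT NOT NULL,
    journal TEXT,
    year    INTEGER,
    doi     TEXT UNIQUE
);
CREATE TABLE author (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
-- Many-to-many link: an article has several authors and vice versa.
CREATE TABLE article_author (
    article_id INTEGER NOT NULL REFERENCES article(id),
    author_id  INTEGER NOT NULL REFERENCES author(id),
    PRIMARY KEY (article_id, author_id)
);
"""


def create_database(path=":memory:"):
    """Open a database (in memory by default) and create the tables."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def add_article(conn, title, journal, year, doi, authors):
    """Insert one article and link it to its authors; return the article id."""
    cur = conn.execute(
        "INSERT INTO article (title, journal, year, doi) VALUES (?, ?, ?, ?)",
        (title, journal, year, doi),
    )
    article_id = cur.lastrowid
    for name in authors:
        # Reuse an existing author row if the name is already stored.
        conn.execute("INSERT OR IGNORE INTO author (name) VALUES (?)", (name,))
        (author_id,) = conn.execute(
            "SELECT id FROM author WHERE name = ?", (name,)
        ).fetchone()
        conn.execute(
            "INSERT OR IGNORE INTO article_author (article_id, author_id) "
            "VALUES (?, ?)",
            (article_id, author_id),
        )
    conn.commit()
    return article_id


def titles_by_author(conn, name):
    """Return the titles of all articles by the given author, sorted."""
    rows = conn.execute(
        """
        SELECT a.title
        FROM article AS a
        JOIN article_author AS aa ON aa.article_id = a.id
        JOIN author AS au ON au.id = aa.author_id
        WHERE au.name = ?
        ORDER BY a.title
        """,
        (name,),
    )
    return [row[0] for row in rows]


if __name__ == "__main__":
    conn = create_database()
    # Placeholder values, not real articles.
    add_article(conn, "Example article A", "Example Journal", 2015,
                "10.0000/example-a", ["Author One", "Author Two"])
    print(titles_by_author(conn, "Author Two"))
```

Because every article has the same handful of fields and the relationships between articles and authors are known in advance, fixed tables joined by keys fit the data naturally. That is the main reason a schema-less store would give us little benefit here.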

We will update this blog as our project progresses, hopefully once every two weeks.

All the best,

Jussi, Joni, Joona & Miikka

Sounds good. Good luck!

Thanks Tero!